AI-Native Workflow

Design-to-Code Without the Handoff

Over the last 12 months, every project I've shipped has run through a pipeline in which Figma MCP, Claude Code, and Google Antigravity collapse the gap between design intent and working code. Since December 2025, Claude Code and Antigravity have been my primary build environment, which removes the traditional handoff steps.

Design → PoC

PoC as part of design delivery — no separate sprint

1:1

Design-to-code fidelity — intent preserved

3 Tools

One pipeline — zero traditional handoff steps

20+ Yrs

PM / UX — now the biggest personal shift

Opening Context

The Boundaries Are Getting Blurry

For 20+ years I did what PM/UX people do: market analysis, user stories, wireframes, clickable prototypes — all designed to communicate intent to engineers. The craft was real, but the communication was indirect. Each handoff introduced drift between what I intended and what shipped.

What's changing isn't the importance of that craft — it's the channel. When design becomes a working proof-of-concept in hours, you stop explaining what you meant and start showing it running.

The Work

Three Tools, One Conversation

Figma MCP Server turns design files into structured data — tokens, component hierarchies, assets — extracted programmatically. No redlining, no PDF annotations. The first translation step disappears.
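As a rough illustration of what "structured data" means in practice, the sketch below flattens a nested token set into CSS custom properties. The payload shape, the token names, and the helper functions are assumptions made for this example; they are not the actual output format of the Figma MCP Server.

```python
from typing import Any

# Hypothetical token payload; the real Figma MCP output shape may differ.
TOKENS: dict[str, Any] = {
    "color": {"primary": "#1A73E8", "surface": "#FFFFFF"},
    "spacing": {"sm": "8px", "md": "16px"},
}


def flatten_tokens(tokens: dict[str, Any], prefix: str = "") -> dict[str, str]:
    """Flatten nested token groups into CSS custom property names."""
    flat: dict[str, str] = {}
    for key, value in tokens.items():
        name = f"{prefix}-{key}" if prefix else key
        if isinstance(value, dict):
            # Recurse into a token group such as "color" or "spacing".
            flat.update(flatten_tokens(value, name))
        else:
            flat[f"--{name}"] = str(value)
    return flat


def to_css(tokens: dict[str, Any]) -> str:
    """Render the flattened tokens as a :root block."""
    lines = [f"  {name}: {value};" for name, value in flatten_tokens(tokens).items()]
    return ":root {\n" + "\n".join(lines) + "\n}"


print(to_css(TOKENS))
```

Once tokens arrive in a form like this, the build step can consume them directly instead of reading values off an annotated mockup.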

Claude Code transforms that structured spec into working components. It reads the relationships between spacing tokens and layout intent, and between color roles and interaction states. Refinement happens in conversation, not in Jira tickets. Since December 2025 it has been the default build environment for every project rather than a tool I reach for occasionally.

Google Antigravity, Google's agentic IDE, which I have used since December 2025, handles multi-platform scaffolding alongside Claude Code. Antigravity scaffolds the application structure and manages cross-platform targets, while Claude Code fills in interaction logic and refines components in real time. Together they compress what used to be a multi-sprint handoff into a single working session.

The pipeline: Figma → MCP extraction → Antigravity scaffolding → Claude Code build → side-by-side refinement. The designer stays in the conversation from ideation to working code.

Impact

The Artifact Is the Conversation

A client-approved functional prototype, built from high-fidelity Figma files and delivered as part of design, with no separate sprint and no QA cycle. The client saw working code at the first review. The conversation shifts from "does this look right?" to "does this work?"

This portfolio is a second outcome — designed in Figma, built entirely through this pipeline. The gap between design and what you're reading is zero.

Before

Requirements → wireframes → clickable prototype → dev sprint → QA, over 2–4 weeks. Each step a silo. Each handoff a translation.

After

Design → MCP extraction → AI build → working prototype — part of one design delivery. One conversation, no handoffs, intent preserved.

Before

Design intent filtered through each interpreter — developer, QA, PM. "This doesn't match the design" was a post-ship conversation.

After

Designer stays in the loop from Figma to code. Drift is caught in real time against the original design, not after sprint review.
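One way to support that real-time comparison is a small automated check that diffs design values against built values. This is a minimal sketch under assumptions: the function name, the flat name-to-value dictionaries, and the sample values are hypothetical, and it is not a description of how the pipeline above actually detects drift.

```python
def find_drift(design: dict[str, str], built: dict[str, str]) -> list[str]:
    """List every design token that is missing from, or differs in, the build."""
    report = []
    for name, expected in sorted(design.items()):
        actual = built.get(name)
        if actual is None:
            report.append(f"{name}: missing in build (design says {expected})")
        elif actual.strip().lower() != expected.strip().lower():
            # Comparison ignores case and surrounding whitespace only.
            report.append(f"{name}: design {expected}, build {actual}")
    return report


# Hypothetical values for illustration.
design_tokens = {"--color-primary": "#1A73E8", "--spacing-md": "16px"}
built_tokens = {"--color-primary": "#1a73e8", "--spacing-md": "12px"}

for line in find_drift(design_tokens, built_tokens):
    print(line)
```

A check like this only covers values that can be compared mechanically; judging whether the result matches the design intent still sits with the designer.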

Across projects in premium audio, publishing, and AI systems, the pattern recurs: design tokens solve naming but not translation, so the handoff gap persists in a traditional process. This workflow closes it.

Reflection

The Role Isn't Changing. The Conversation Is.

The craft of PM and UX — research, requirement analysis, systems thinking — matters more now, because the distance between decision and implementation is hours, not weeks. Bad decisions ship faster. Good decisions ship better. The judgment still lives with the person. The workflow just removes the friction.

AI Agent Stories

Designing the Reasoning Layer

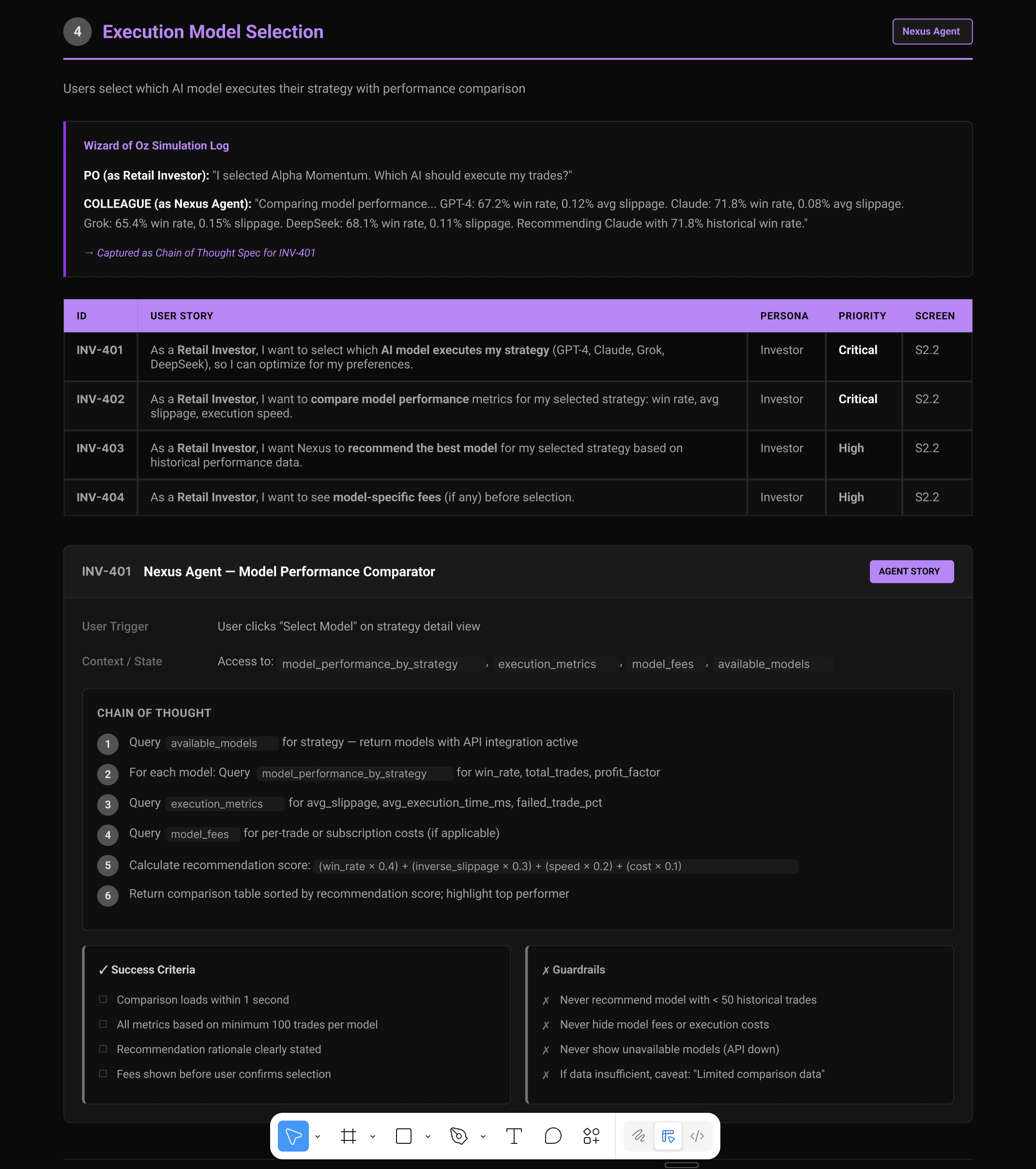

Writing user stories for AI agents exposes a layer of design that traditional UX frameworks don't address: not just what a user needs, but how the agent reasons its way to a response. The Execution Model Selection story below illustrates this in a real context — a retail investor choosing which AI model executes their trading strategy.

Three patterns emerge that every agent workflow must resolve. Chain of Thought makes the agent's reasoning explicit and auditable — a sequence of queries across available models, performance metrics, and fees, ending in a scored recommendation. Human-in-the-Loop defines the moment the agent pauses: the user selects the model, and the agent surfaces comparison data before any execution occurs. Agent Guardrails set the non-negotiables — never recommend a model with fewer than 50 historical trades, never hide fees, never show unavailable models, always caveat when data is insufficient.

Together these patterns shift agent design from prompt engineering into product thinking: what should the agent reason through, when should it defer, and what must it never do regardless of input.
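The guardrails named in the story can be sketched as a filter that runs before the agent shows anything to the user. The data model, field names, and function below are assumptions for illustration; only the 50-trade threshold and the four rules come from the story. Reading "never hide fees" as "exclude any model whose fee is unknown" is also an assumption.

```python
from dataclasses import dataclass
from typing import Optional

MIN_HISTORICAL_TRADES = 50  # threshold taken from the story's guardrails


@dataclass
class ModelCandidate:
    # All field names are hypothetical, chosen for illustration.
    name: str
    historical_trades: int
    fee_pct: Optional[float]  # None means the fee is not disclosed
    available: bool


def apply_guardrails(
    candidates: list[ModelCandidate],
) -> tuple[list[ModelCandidate], list[str]]:
    """Return the models the agent may present, plus caveats for the user."""
    allowed: list[ModelCandidate] = []
    caveats: list[str] = []
    for model in candidates:
        if not model.available:
            continue  # never show unavailable models
        if model.fee_pct is None:
            # Assumed reading of "never hide fees": no fee data, no listing.
            caveats.append(f"{model.name}: excluded, fee not disclosed")
            continue
        if model.historical_trades < MIN_HISTORICAL_TRADES:
            caveats.append(
                f"{model.name}: excluded, only {model.historical_trades} historical trades"
            )
            continue
        allowed.append(model)
    if not allowed:
        # Always caveat when data is insufficient.
        caveats.append("Insufficient data to recommend any model.")
    return allowed, caveats
```

The function only returns candidates and caveats. Selecting a model and triggering execution stay with the user, which is where the Human-in-the-Loop pause sits.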

INV-401 · Nexus Agent — Execution Model Selection · Chain of Thought · HITL · Agent Guardrails

Recognition

- Active — 6 months, every project

- This portfolio — designed in Figma, built through this pipeline

- Client-approved prototype — part of design delivery, first review

- Claude Code · Figma MCP Server · Google Antigravity